Artificial intelligence is part of daily routines more often than most people realize. Smartphones suggest replies to messages, streaming services recommend shows based on viewing habits, and navigation apps predict traffic patterns to suggest faster routes. At its core, artificial intelligence refers to computer systems that perform tasks usually associated with human intelligence, such as recognizing speech, identifying objects in photos, or playing complex games. These systems rely on large amounts of data, algorithms, and computing power, not magic or consciousness. Early efforts date back to the 1950s, when researchers first explored machines that could simulate aspects of human thought. Decades of progress in processing speed and data storage turned those ideas into practical tools used by millions today.

Machine learning forms the foundation of most modern artificial intelligence applications. Instead of programmers writing explicit rules for every scenario, these systems learn patterns from examples. Feed a program thousands of photos labeled "cat" or "not cat," and it gradually learns which visual features, such as the shapes of ears, whiskers, and fur textures, tend to distinguish cats, so it can recognize them in new images. Deep learning takes this further by using layered neural networks loosely inspired by the human brain. Each layer transforms the output of the previous one and passes the result on, so early layers might respond to simple edges while later layers combine them into more abstract concepts. This approach powers voice assistants that understand a range of accents and image tools that help detect subtle details in medical scans.
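To make "learning from labeled examples" concrete, here is a minimal Python sketch of a perceptron, one of the simplest learning algorithms. It is an illustration under stated assumptions, not how production image recognizers work: the two numeric features per example (a hypothetical "ear pointiness" and "whisker length" score), all the data values, and the function names are invented for this example and stand in for what a real system would extract from image pixels.

```python
# Hypothetical toy data: each example is (features, label), label 1 = cat, 0 = not a cat.
# Features are made-up scores [ear_pointiness, whisker_length], not real image data.
training_data = [
    ([0.9, 0.8], 1),
    ([0.8, 0.9], 1),
    ([0.7, 0.7], 1),
    ([0.2, 0.1], 0),
    ([0.1, 0.3], 0),
    ([0.3, 0.2], 0),
]


def predict(weights, bias, features):
    """Return 1 ("cat") if the weighted sum of features is above zero, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0


def train_perceptron(data, epochs=20, learning_rate=0.1):
    """Learn weights from labeled examples instead of hand-written rules."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            # error is +1 or -1 when the guess is wrong, 0 when it is right.
            error = label - predict(weights, bias, features)
            # Nudge each weight (and the bias) toward the correct answer.
            weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


weights, bias = train_perceptron(training_data)
print(predict(weights, bias, [0.85, 0.75]))  # new, unseen example → 1
print(predict(weights, bias, [0.15, 0.2]))   # new, unseen example → 0
```

Nobody wrote a rule such as "pointy ears mean cat"; the weights come entirely from the labeled examples. A deep learning network stacks many units like this into layers, with each layer's outputs feeding the next, although real networks use different activation functions and training methods than this single-unit toy.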

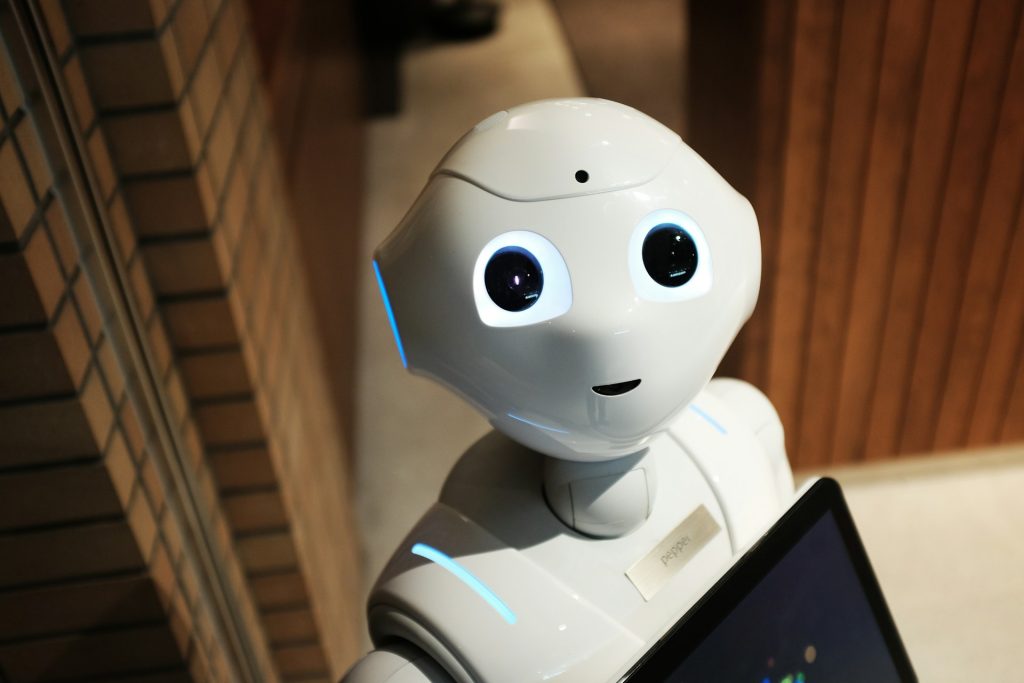

Everyday examples show how deeply artificial intelligence is built into ordinary tools. Virtual assistants respond to spoken commands, translation apps convert text between languages almost instantly, and recommendation engines shape online shopping and entertainment choices. Many of these tools improve with use because they adapt to interactions and feedback. Behind the scenes, companies collect usage data, often anonymized or aggregated, to refine their models, weighing convenience against privacy. Users get more personalized experiences, while developers work to make systems more accurate and efficient over time.
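One simple way a recommendation engine can adapt to interactions is user-based collaborative filtering: find users whose histories resemble yours and suggest what they liked. The Python sketch below assumes a tiny, hypothetical viewing history (the user names and shows are invented) and compares users with cosine similarity; real streaming and shopping services work with far larger data and more elaborate models.

```python
import math

# Hypothetical viewing history: 1 means the user watched and liked the show.
ratings = {
    "ana":   {"Show A": 1, "Show B": 1, "Show C": 1},
    "ben":   {"Show A": 1, "Show B": 1, "Show D": 1},
    "carla": {"Show E": 1, "Show F": 1},
}


def cosine_similarity(a, b):
    """Score how alike two users are, based on the shows they have in common."""
    shared = set(a) & set(b)
    dot = sum(a[s] * b[s] for s in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(user, ratings):
    """Suggest shows that similar users liked but this user has not seen yet."""
    scores = {}
    for other, their_ratings in ratings.items():
        if other == user:
            continue
        similarity = cosine_similarity(ratings[user], their_ratings)
        for show, rating in their_ratings.items():
            if show not in ratings[user]:
                scores[show] = scores.get(show, 0.0) + similarity * rating
    # Highest score first; drop shows that no similar user supports.
    ranked = sorted(scores.items(), key=lambda kv: -kv[1])
    return [show for show, score in ranked if score > 0]


print(recommend("ana", ratings))  # → ['Show D']
```

Because the scores are computed directly from viewing histories, every new interaction changes the input and can change the suggestions, which is the sense in which such tools improve with use.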

Questions about artificial intelligence often center on its limits and future direction. Current systems excel at narrow, specific tasks but lack general understanding or true creativity. They reproduce patterns from their training data without grasping context the way people do. Researchers continue to explore ways to expand these capabilities, and how that progress unfolds will depend on ethical frameworks, regulatory oversight, and public input. For beginners, the key takeaway is straightforward: artificial intelligence is a powerful pattern-recognition technology that augments human abilities rather than replacing them entirely. Familiarity with the basic concepts helps people navigate an increasingly tech-driven world with greater confidence.